Super-resolution and de-noising on XMM-Newton images using deep learning - Machine Learning Group

Super-resolution and de-noising on XMM-Newton images using deep learning

Sam F. Sweere, Ivan Valtchanov, Maggie Lieu, Antonia Vojtekova, Eva Verdugo, Maria Santos-Lleo, Florian Pacaud, Alexia Briassouli, Daniel Cámpora Pérez

In recent years significant progress has been made in the field of de-noising and super-resolution using machine learning methods. Most of this research is done on images that were captured on earth or computer-simulated images such as games and art. The goal of this research is to use these ideas and develop deep learning-based methods for super-resolution and de-noising of images from ESA's X-ray space telescope XMM-Newton in order to increase their scientific value. The trained network could be used to improve the quality of the XMM-Newton images, both in terms of noise properties (de-noising) and higher spatial resolution (super-resolution). As the XMM-Newton Science Archive contains observations spanning about 20 years, there is a lot of data to test and train a model on. At the end of the day, improving the quality of the already available data is also of great interest to the astronomical community and the lasting legacy of the XMM-Newton.

Current progress:

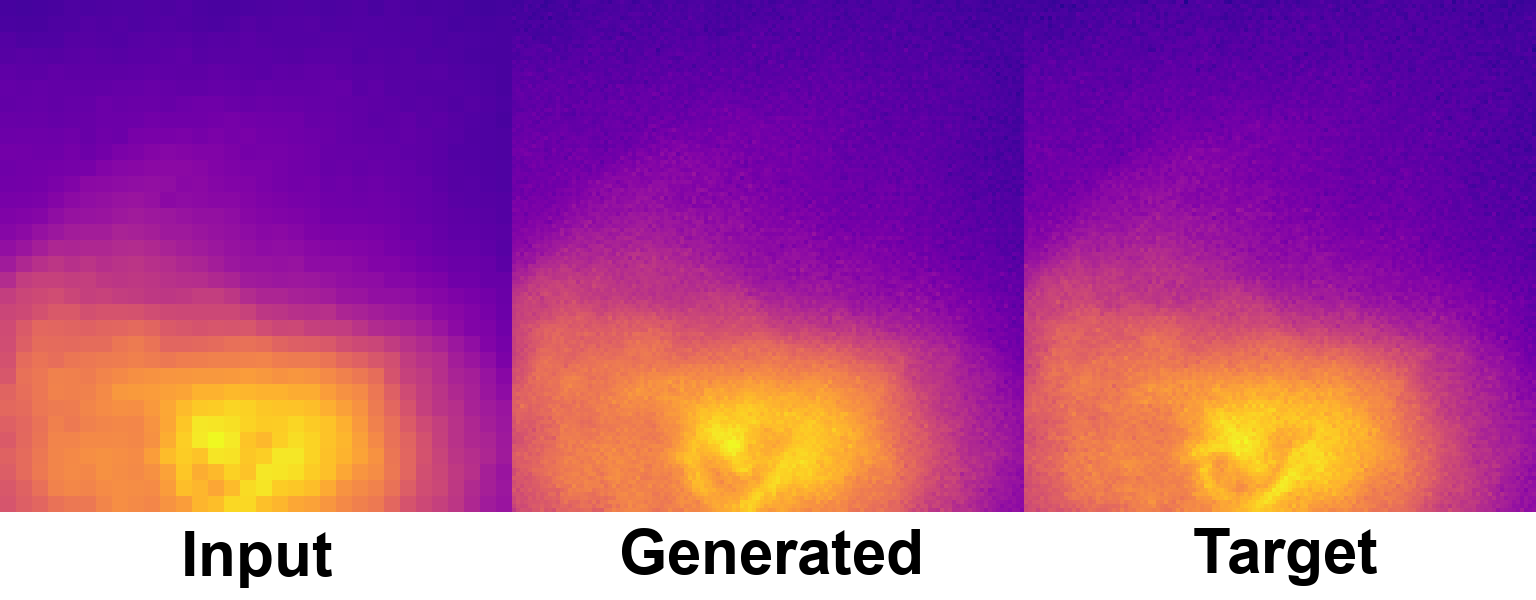

To train a super-resolution model we need low and high-resolution image pairs. An x-ray telescope with a higher spatial resolution than XMM-Newton is NASA's Chandra X-ray Observatory. However, the amount of good overlapping image pairs is limited and the X-ray images have different properties. Therefore we chose to use the SIXTE X-ray simulator to generate a synthetic high-resolution dataset of simulated XMM-Newton observations. Using IllustrisTNG galaxy formations simulations as the simulation input. This way we can create a big dataset to train the super-resolution model. To validate our results we increase the resolution of real XMM-Newton observations and compare them with the Chandara observations (with a higher spatial resolution).

We are currently experimenting with different deep learning models architectures such as CNN's (convolutional neural networks) and GAN's (generative adversarial neural networks).

Image 1: Super-resolution image of an IllustriusTNG x-ray simulation using a ESR-GAN based model. From left to right, 32x32 input, 128x128 generated super-resolution image and 128x128 ground-truth target image. This image is part of the validation set and has therefore not been used to train the model.